Abstract

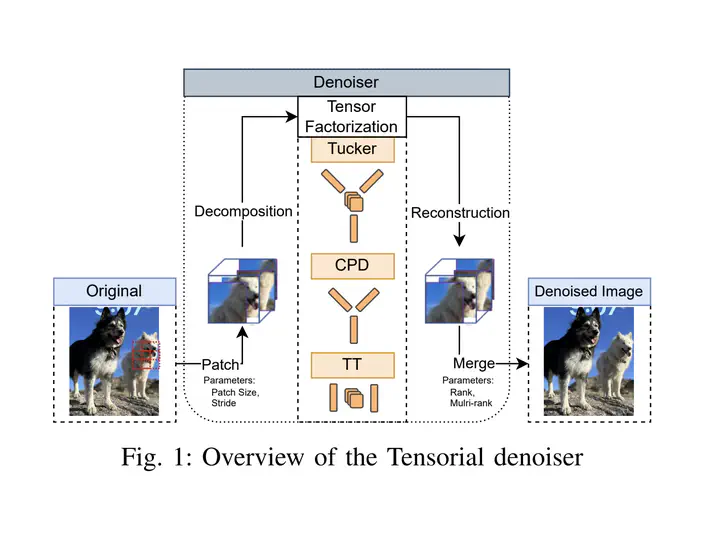

As machine learning techniques become increasingly prevalent in data analysis, the threat of adversarial attacks has grown, necessitating robust defense mechanisms. Among these defenses, methods that exploit low-rank approximations for input data preprocessing and for neural network (NN) parameter factorization have shown promise. Our work advances this line of research by integrating tensorization of the input data with low-rank decomposition and tensorization of the NN parameters to enhance adversarial defense. The proposed approach demonstrates significant defense capabilities, maintaining robust accuracy even when subjected to the strongest known auto-attacks. Evaluations against state-of-the-art robustness benchmarks show that our results are competitive with the best available defensive methods and exceed all current defense strategies that rely on tensor factorizations. This study underscores the potential of integrating tensorization and low-rank decomposition as a robust defense against adversarial attacks in machine learning.
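To make the input-side idea concrete, the following is a minimal sketch of tensorizing an input and suppressing its low-energy components with a low-rank projection. It is an illustration under stated assumptions, not the method evaluated in this work: the function names (`tensorize`, `low_rank_mode_projection`, `preprocess`), the target tensor shape, and the per-mode ranks are all hypothetical, and the decomposition shown is a sequentially truncated higher-order SVD, which may differ from the factorization used here.

```python
import numpy as np


def tensorize(x, shape):
    """Reshape an array into a higher-order tensor (hypothetical helper)."""
    return np.asarray(x, dtype=float).reshape(shape)


def low_rank_mode_projection(t, mode, rank):
    """Project `t` onto the top-`rank` left singular vectors of its
    mode-`mode` unfolding (one step of a truncated higher-order SVD)."""
    # Unfold: bring `mode` to the front and flatten the remaining modes.
    moved = np.moveaxis(t, mode, 0)
    unfolded = moved.reshape(t.shape[mode], -1)
    # Leading left singular vectors span the dominant mode subspace.
    u, _, _ = np.linalg.svd(unfolded, full_matrices=False)
    u = u[:, :rank]
    # Orthogonal projection onto that subspace, then fold back.
    projected = u @ (u.T @ unfolded)
    return np.moveaxis(projected.reshape(moved.shape), 0, mode)


def preprocess(image, tensor_shape, ranks):
    """Tensorize `image`, truncate each mode in turn, and restore its shape.

    `tensor_shape` and `ranks` are assumed hyperparameters chosen by the user.
    """
    image = np.asarray(image, dtype=float)
    t = tensorize(image, tensor_shape)
    for mode, rank in enumerate(ranks):
        t = low_rank_mode_projection(t, mode, rank)
    return t.reshape(image.shape)
```

For example, a 32 x 32 input could be passed as `preprocess(image, (4, 8, 4, 8), (2, 4, 2, 4))` before being fed to the network. Lower ranks discard more of the input, which may remove more of a perturbation but also more of the signal, so the ranks would need to be tuned against clean accuracy.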